TTI Vanguard stimulates thinking about technological possibilities through a method it calls ‘future-scan’. Future-scan focuses on unanticipated sources of change and evaluates their transformative promise through highly interactive sessions and debate, stimulating breakthrough ideas.

The September 2017 conference invited members into discussions on Risk, Security, & Privacy. Dialogue was opened and enhanced by speakers regarded as thought-leaders in their industries. Additionally, a unique feature was added to this year's conference: a private tour of the prestigious Johns Hopkins University Applied Physics Laboratory.

Risk, Security, & Privacy

Risk and security can no longer be separated, if they ever could. In fact, at some companies, cybersecurity is being moved from its corporate silo into a broader basket of risk management.

An argument has been made that some companies neglect cybersecurity because the cost of being digitally attacked is still less than the cost of protecting themselves with a proper cybersecurity strategy, which may not have been effective anyway. Thankfully, that view is too cynical for most organisations, but a realistic assessment puts cybersecurity as just one element in a broad framework of costs, risks, and rewards.

Here we take a look at our Key Takeaways from Risk, Security, & Privacy and the risks that need to be managed, focusing on those introduced by computer systems, mobile devices, the Internet of Things (IoT), and cloud computing. We also consider the tensions and trade-offs among security, efficiency, customer satisfaction, and privacy.

“SANDSTONE became members of TTI Vanguard to stay ahead of the curve and to find out what’s really going on, before it hits the news. We accomplish this by staying IN with Defence Contractors, Fortune 100 Company Leaders, and Division One Universities. Knowing the direction in which things are moving is the best way to Follow the Money.”

S. Watkins, SANDSTONE CEO

Interesting Facts and Hard Stats on our Key Takeaways

Hackability - How Easily We Can Be Hacked

Unwarranted data collection

Interesting Facts

Today almost everything is connected: your washing is ready - Ping - message to your phone; your baby is crying - Ping - message to your tablet with video feed; your pet's food is low - Ping. However, how safe are these digitally connected devices? Your computers and other conventional handheld devices are comparatively well protected, with security already installed at the factory. It is the smaller devices, or internet-enabled applications on common household items, that collect data when you think they would not or could not: devices such as smart lightbulbs, fridges with Twitter-enabled screens, and digitally connected washing machines.

Hard Stats

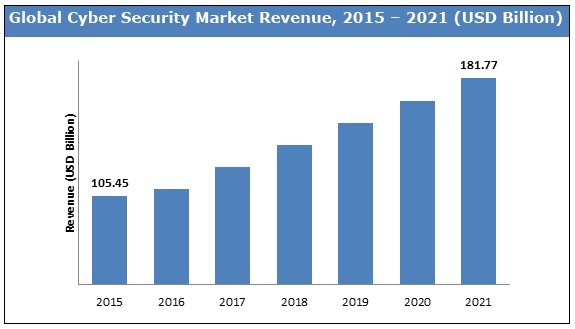

Cybersecurity continues to grow alongside the Internet of Things (IoT). The global Cybersecurity market is snowballing and is predicted to reach more than $181 Billion by 2021 - rapid growth for a date not far off. The chart above displays steady growth from 2015 to 2021.

Source: Zion Research

Cards with tap and chip

Interesting Facts

Skilled hackers have developed devices that steal debit and credit card information simply by standing next to you. It takes only small modifications to equipment to bypass chip-and-PIN protections and enable unauthorised payments. These hacking chips steal your data and impersonate your card when making a transaction. In demonstrations at TTI Vanguard, individuals' data was collected using thermal imaging, showing how easy it is to collect chip data and PINs without your knowledge.

Hard Stats

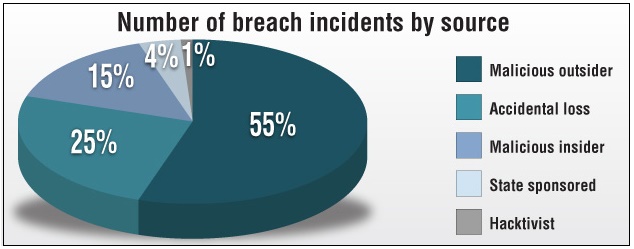

While stolen cards are being used less at ATMs and at registers inside shops, their use is increasing in online transactions, whether online shopping or digital money transfers. Credit card breach incidents by a malicious outsider accounted for 46% of total breach incidents in 2014; by 2016 card hackers had reached new heights at 55% of total breach incidents.

Source: creditcards.com

Hacker schools and hacker towns

Interesting Facts

While Cybersecurity companies work to update the systems protecting our information, hackers are equally busy educating themselves to steal or sell your data. Free courses are available online, teaching how to hack Wi-Fi networks and computer firewalls, although the FBI and other security agencies can track and report people using these sites. A growing concern is hacker towns popping up, where the citizens protect each other and entire micro-economies are built around hacking to steal money or sell data. One example is a remote town in Romania that became ‘Cybercrime Central’ - a term coined by WIRED Magazine.

Hard Stats

Ethical hackers can make up to six figures working for technology companies, performing Penetration Tests on their digital security. One problem with these highly skilled individuals is their sociability in the workplace. Why show up to a 9-to-5 when you can be introverted at home, hacking unprotected systems for six figures, if not more?

What is attracting highly skilled workers to cybercrime?

- Ability to work remotely - avoiding social interaction.

- No violence - online robbery is free of direct physical conflict.

- Silent crime - cybercrime is already hard to track. Being even a few days late in reporting missing or misused data puts the hacker well ahead of your chances of discovering who they are and where your data or money has gone.

- Embarrassed businesses - some companies will absorb the loss from a hack without going public about it. They believe the cost of a hack is lower than the cost of preventing it. Another reason is to avoid the embarrassing press of being hacked and having consumers lose faith in the business.

- Criminal charges - sentences tend to be much lighter than for the physical-crime equivalent.

  - Hacker criminal sentence example: Jeanson Ancheta, hacker alias ‘Gobo’. Charges: four counts of Fraud at a Federal level involving military computers. Sentence: 57 months in prison and forfeiture of his BMW vehicle. (USA)

  - USA Federal Sentencing for one count of Fraud or Embezzlement: 6 years in prison, or 7 years if the defendant has been convicted before, with a possible maximum term of 20 years.

The overarching issue - these skilled workers lack the social skills to be recognised and hired.

Artificial Intelligence - Watching, Listening, Learning

Evolution of privacy

Interesting Facts

Siri from Apple, Cortana from Microsoft, Google Assistant from Google, Watson from IBM, and Alexa from Amazon - all are collecting data while making your life seemingly convenient. Convenient but not private. With every update we consent to, and with every ‘terms and conditions’ we click yes to, we surrender our data. In turn, companies use this information for improved marketing and enhanced functionality. Unfortunately, this data is often abused or sold, allowing other businesses you are not affiliated with to sell to you.

While these machines learn to help you, others are learning to spy on you, some not even in secret. The website Shodan.io is a search engine for internet-connected devices, including webcams. Any webcam hooked up to the internet without security can be accessed from anywhere in the world, which goes back to the baby monitor example in our article's first section, ‘Hackability’. The worst part is that this is not a hack: if you have not protected your webcams, then you have left them open for public viewing.

Hard Stats - Speaking of hard stats, these are hard-to-obtain statistics.

The companies behind these assistants go to great lengths to protect details of how much information they acquire on us and how they collect it.

Your Artificial Intelligence (AI) mic is always on. Always. These AI devices use ‘wake-words’ to activate, or ‘awaken’, so their microphones must always be on to detect these wake-words. Examples of wake-words are Apple's "Hey Siri", Google's "OK Google", or addressing Amazon's Alexa by its name - all hands-free voice activations.
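To illustrate why a wake-word design implies an always-on microphone, here is a toy sketch in Python. It is only a simulation under loud assumptions: it works on already-transcribed phrases rather than audio, the function name `capture_after_wake_word` and the `WAKE_WORDS` tuple are hypothetical, and it does not reflect how any vendor actually implements its assistant.

```python
from typing import Iterable, List

# Hypothetical wake-words, borrowed from the examples in the text.
WAKE_WORDS = ("hey siri", "ok google", "alexa")


def capture_after_wake_word(utterances: Iterable[str]) -> List[str]:
    """Return the utterance that directly follows each wake-word.

    Every utterance has to be inspected to spot a wake-word,
    which is why the listening loop can never be paused.
    """
    captured = []
    awake = False
    for utterance in utterances:
        text = utterance.strip().lower()
        if awake:
            # The device is awake: treat this utterance as a command.
            captured.append(utterance)
            awake = False
        elif text in WAKE_WORDS:
            # Wake-word heard: the next utterance will be captured.
            awake = True
    return captured


heard = [
    "what's for dinner",
    "Alexa",
    "order a pizza",
    "turn that off",
    "OK Google",
    "play jazz",
]
commands = capture_after_wake_word(heard)
print(commands)
```

In this sketch only the phrases following a wake-word are kept, but the loop still has to examine every phrase it hears to find them.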

While it is unknown just how much data has been collected by listening AI, we do know it is the fastest-growing data collection method to date. However, what do these companies do with all your data? The answer is simple: whatever they want. When purchasing these devices, you enter into a contractual agreement, supplying your data for ‘better service’. Better service can be interpreted in a number of ways, depending on whether you mind their in-depth tracking or not. Your data is entered into advanced algorithms designed to market products specifically to you, supplied (in some countries by law) to the government when asked, or even sold to other companies.

Outside of AI collecting data, it is worth noting that seemingly harmless apps, such as your flashlight app, can do more than their basic function. Have a look at your uncomplicated apps that perform small functions: if they are over 200KB, they are likely doing more than their intended function.

Digitally Connected - Humanly Disconnected

Your new babysitter: Alexa

Interesting Facts

As Amazon's Alexa slowly enters homes across North America, people are using the AI for more than just turning their lights on and ordering pizza. They are using the machine to babysit. Alexa's AI can learn what your children like to watch on TV and where they are in the house, tell them jokes and interesting facts, and even read them a story. So naturally, or unnaturally, parents are using Alexa as a substitute for a human babysitter.

Hard Stats

Safewise, a home security site, lists Amazon's Alexa as the number one home automation device for toddlers. Safewise's reasons: potty-training and bedtime reminders, storytelling, and TV/Music control. Hopefully, we do not need to point out the social implications this can have on a child.

"Nice to meet you, my name is <BOT NAME HERE>"

Interesting Facts

Bots are taking over, and not entirely in a bad way. Yes, they are combing the internet to scam you with newer and trickier methods, but they also appear in friendly(ish) ways: greeting and assisting you while you shop online or check your online banking. Bots also provide social and emotional support on hotlines or dating sites, and soon will be writing books and creating classical art so effectively that humans will have a hard time competing. When a Bot knows everything you like to read and view, how can one compete? How does this affect society, and when do we draw an ethical line in the sand? Some view creative-bots in a positive light, bringing us well-written books and beautiful paintings customised precisely to our taste; others see the job losses and the lack of humanity in art.

Hard Stats

Bots are popping up everywhere. The Atlantic reports that 52% of web traffic today is generated by Bots, good or bad, whether you are conscious of interacting with them or not.

Most new or updated websites today use Bots to greet you when you visit, answering and recording your questions both to assist you and to feed the company's research.

Unfortunately, not all Bots are here to help. Bot developers have created programs to manipulate emotions and spread fake news.

Notable cases of harmful bots

- As many as 48 Million Twitter accounts are estimated to be Bots, and even more accounts are run by humans who lure in followers only to replace themselves with Bots once you are convinced.

- Facebook and dating apps like Tinder have been densely populated with Bots, mainly using emotional manipulation to bait insecure individuals into buying them (their human developers) items or participating in expensive online social interactions or chat-groups.

- Unfortunately, users manipulated by Bots on social platforms rarely care, and some even enjoy the attention - fake news articles generated by Bots continue to be shared by real people, even when they know the news is false and came from a Bot.

The Missing Morality

Ethics, or the lack thereof

While the conference was incredibly innovative and informative, ethics was not touched on. Mentions of social responsibility and the ethical use of technology were nowhere to be found. Why is it important for an investment company to take notice of this? Concerns over the ethical use of technology have significantly slowed or completely stonewalled some companies' progress. If a business is operating unethically, there is a good chance it will be stopped. This is not always the case, however; it will take time for laws on ethical technology usage to catch up with today's unethical practices, such as the relentless data collection by Google.

Like the law, our universities are still catching up with online ethics. A computer science degree from Carnegie Mellon University has a total of fifty courses: three in cybersecurity and zero in ethics.

This gap in digital ethics leaves us with a few questions: How do you govern the internet? Who would be permitted to regulate it? Who decides what is right and wrong?

Social consequence

As we become increasingly lost in our phones, and with AI beginning to raise our children at their most vulnerable ages, our social disconnect widens. Social disconnect caused by technology can and will have extreme effects on entire countries; one notable effect can be seen today in Japan. Japan is experiencing an age gap: its new generation is not growing as fast as the present generation is ageing. The present generation is not dating or reproducing - they lack common social communication skills because they interact digitally - and there are now machine ‘aides’ making the reproductive drive less of a focus.

So, with all this advanced technology, what is the ethical solution? Well, it is surprisingly simple: slow down. Life is not about algorithms and a constant quest for convenience. Talk to each other, communicate in person, and do not lose in one generation what took thousands of generations to build.

Bottom Line

The purpose of SANDSTONE's membership with TTI Vanguard is to stay ahead of the curve. The September conference focused on one of our core investment themes: Global Instability, which generates the rising need for Cybersecurity. At TTI Vanguard we rub shoulders with thought-leaders while tracking, researching, and developing speculation on the next big players in Cybersecurity - a billion-dollar industry with immense local and global opportunities.

Cybersecurity Hard Stats

- Cybercrime damage costs are forecast to hit $6 Trillion annually by 2021.

- Cybersecurity spending may exceed $1 Trillion in total from 2017 to 2021 - far less than the forecast Cybercrime damage.

- Cybercrime will more than triple the number of unfilled Cybersecurity jobs - predicted to reach 3.5 million by 2021.

- Global ‘ransomware’ (holding your data hostage) has already hit $5 Billion in 2017 and is expected to increase before year's end.
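To put the first two figures in the list above side by side, here is a rough back-of-the-envelope calculation in Python. It assumes, purely for illustration, that the $1 Trillion of spending is spread evenly across the five years from 2017 to 2021; the variable names are ours and the even split is a simplification, not a forecast.

```python
# Figures quoted in the list above, in US dollars.
damage_per_year_2021 = 6_000_000_000_000      # forecast annual Cybercrime damage by 2021
spending_total_2017_2021 = 1_000_000_000_000  # cumulative Cybersecurity spending

# Assumption: spending is spread evenly over 2017, 2018, 2019, 2020, and 2021.
years = 5
avg_spending_per_year = spending_total_2017_2021 / years

# How many dollars of forecast damage per dollar of average annual spending.
ratio = damage_per_year_2021 / avg_spending_per_year

print(f"Average annual spending: ${avg_spending_per_year / 1e9:.0f} Billion")
print(f"Forecast damage is about {ratio:.0f}x average annual spending")
```

Under that even-split assumption, average spending works out to $200 Billion a year, roughly one-thirtieth of the forecast annual damage.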

Just the Beginning - Upcoming TTI Vanguard Conferences

- December in San Francisco: [NEXT]

  - Topics - ageing populations, scarcity of natural resources, climate change, and continued urbanisation, globalisation, and virtualisation. Additional subjects - bioengineered supercomputers, sensors, satellites, big data, and a view of artificial intelligence. Find out what is [NEXT].

- March in L.A.: Designing and Doing

  - Topics - making technology usable, notably in speech recognition and generation, image processing, and mobile interfaces. Additional subjects - real-time use of sensors, big data, machine learning, and the digital cloud.

- June in New York: Intelligence, Natural and Artificial

  - Topics - machine learning's capability of going its own way, whether it is Facebook bots inventing their own language or swarms of drones making collective decisions that can't be attributed to any one node.

We remain focused on companies positioned to capitalise on exponential growth technologies and Cybersecurity.

Sources: Zion Market Research, CreditCards.com, CSO Online, Safewise, Forbes, Wiki, White Collar Crime Resources